Why Agent Loops Are the Most Important New Work Primitive Since the Spreadsheet

This post is inspired by "Autoresearch, Agent Loops and the Future of Work," an episode of the AI Daily Brief.

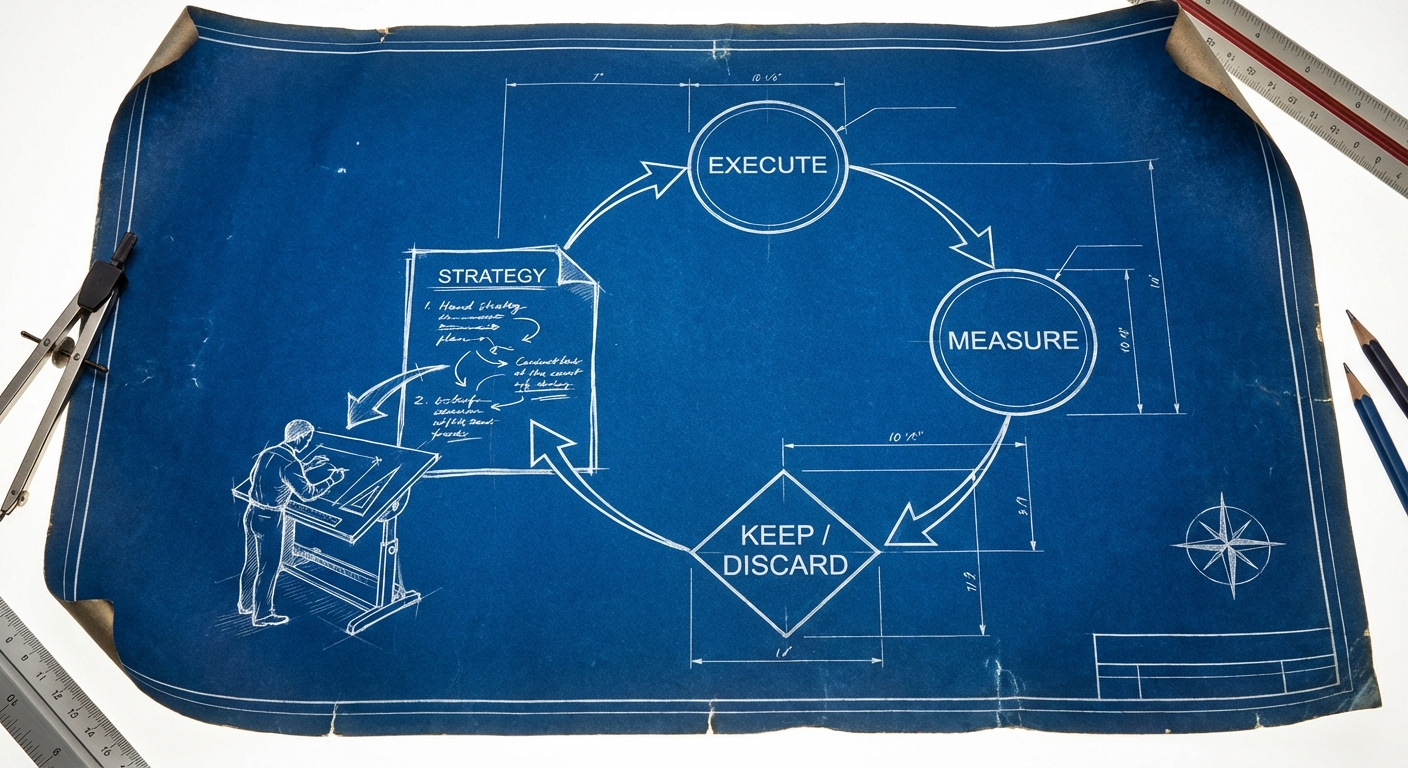

Andrej Karpathy released a project called autoresearch on a Saturday afternoon. By Sunday morning, it had run 83 experiments autonomously, kept 15 improvements, and driven measurable progress on a language model training benchmark. No human touched the code. The AI agent read a strategy document, made a change to the training script, ran a five-minute experiment, checked the score, kept or discarded the result, and looped. Eighty-three times. Overnight.

The conversation that followed focused, predictably, on ML research and on Karpathy himself. But the real significance of autoresearch has almost nothing to do with language model training. What Karpathy built is the clearest, most distilled example of a genuinely new work primitive: the iterative agent loop. Like spreadsheets, email, or slide decks before it, this primitive is about to cut across every function and role in every organization.

A work primitive is a basic building block so fundamental that it shows up everywhere. People reach for it automatically once they have it. New ones do not come along very often. The window where adopting them early creates outsized returns is open now. And it will not stay open indefinitely.

The Pattern, Not the Project

Autoresearch is a three-file system. One file handles fixed infrastructure: data preparation and evaluation. One file contains the model code that the AI agent is allowed to edit. And one file, written in plain English, contains the instructions for how the agent should behave as a researcher: what experiments to try, what to be cautious about, when to be bold.

The human writes the strategy document. The agent executes within the frame. A single unambiguous number (validation bits per byte, in this case) tells the system whether things got better or worse. Improvements get committed. Failures get discarded. The loop repeats indefinitely.
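The keep-or-discard cycle can be sketched in a few lines of Python. This is a toy illustration, not Karpathy's code: `evaluate` and `propose_change` are hypothetical stand-ins for the real evaluation harness and for the agent editing the training script, and the "model" is reduced to a single number so the sketch stays runnable.

```python
import random
from dataclasses import dataclass, field


@dataclass
class LoopResult:
    best_candidate: float
    best_score: float
    kept: int = 0
    discarded: int = 0
    history: list = field(default_factory=list)  # (step, score, "kept" | "discarded")


def evaluate(candidate: float) -> float:
    """Hypothetical metric standing in for validation bits per byte (lower is better)."""
    return (candidate - 3.0) ** 2


def propose_change(candidate: float, rng: random.Random) -> float:
    """Hypothetical stand-in for the agent making one edit to the current best state."""
    return candidate + rng.uniform(-0.5, 0.5)


def run_loop(start: float, iterations: int, seed: int = 0) -> LoopResult:
    rng = random.Random(seed)
    result = LoopResult(best_candidate=start, best_score=evaluate(start))
    for step in range(iterations):
        trial = propose_change(result.best_candidate, rng)
        score = evaluate(trial)
        if score < result.best_score:
            # Improvement: "commit" the change and build on it next time.
            result.best_candidate, result.best_score = trial, score
            result.kept += 1
            result.history.append((step, score, "kept"))
        else:
            # No improvement: discard the change and keep the previous state.
            result.discarded += 1
            result.history.append((step, score, "discarded"))
    return result


if __name__ == "__main__":
    outcome = run_loop(start=0.0, iterations=83)
    print(f"kept {outcome.kept}, discarded {outcome.discarded}, "
          f"best score {outcome.best_score:.4f}")
```

The only judgment inside the loop is the comparison against the best score so far. Everything a human would call strategy lives outside it, in how changes get proposed and how the score is defined.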

This is not a new concept in ML research. What makes it significant is how cleanly the pattern generalizes. As Craig Hewitt put it:

The person who figures out how to apply this pattern to business problems, not just ML research, is going to build something massive. The code is almost irrelevant. The architecture and mindset is everything.

The pattern has three components: a human writes a strategy document, an agent executes experiments autonomously, and a clear metric decides what stays and what gets discarded. That structure does not require a GPU or a language model training loop. It requires a scoreable outcome and a fast iteration cycle.

The connection is not theoretical. This same loop pattern was already working in software development months earlier. Geoffrey Huntley, working from rural Australia, invented what he calls the Ralph Wiggum technique: feed a prompt to a coding agent, feed whatever it produces back in, and loop until it works. Y Combinator President Garry Tan connected the dots: "Autoresearch didn't emerge from nothing. The same pattern, put an AI in a loop with clear success metrics, was already working in software development by mid-2025."

Five Conditions That Make a Process Loop-Ready

Not every business process is a candidate for an agent loop. But many more are candidates than most organizations realize. The readiness criteria are specific:

The outcome must be scoreable. The loop needs to tell better from worse without asking a human. The more subjective the evaluation, the harder this becomes, though even subjective outcomes can be scored if you build the right evaluation function.

Iterations must be fast and cheap. Bad attempts should waste minutes, not months. If each experiment takes a week to evaluate, the loop loses its advantage over a human researcher.

The environment must be bounded. The agent needs a defined action space: which variables it can change, which files it can edit, which parameters are in scope.

The cost of a bad iteration must be low. You would not point this at live legal filings or production databases. The loop needs room to fail safely.

The agent must be able to leave traces. State lives in external artifacts (files, commits, progress logs), not in the agent's conversation history. Every new agent instance bootstraps its understanding from what previous agents left behind.
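The last condition, state living in external artifacts, is the easiest to picture in code. Below is a minimal sketch that assumes an append-only JSON Lines progress log and a lower-is-better score. The file name, the record fields, and the `record_attempt` and `bootstrap` helpers are all hypothetical and are not taken from any particular tool.

```python
import json
import tempfile
from pathlib import Path


def record_attempt(log_path: Path, description: str, score: float, kept: bool) -> None:
    """Append one experiment record to the progress log (one JSON object per line)."""
    entry = {"description": description, "score": score, "kept": kept}
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")


def bootstrap(log_path: Path) -> dict:
    """Rebuild working state from the log alone, as a fresh agent instance would."""
    state = {"attempts": 0, "best_score": None, "tried": []}
    if not log_path.exists():
        return state
    with log_path.open(encoding="utf-8") as f:
        for line in f:
            if not line.strip():
                continue
            entry = json.loads(line)
            state["attempts"] += 1
            state["tried"].append(entry["description"])
            # Assumption: lower scores are better, and only kept attempts count.
            is_better = state["best_score"] is None or entry["score"] < state["best_score"]
            if entry["kept"] and is_better:
                state["best_score"] = entry["score"]
    return state


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        log = Path(tmp) / "progress.jsonl"
        record_attempt(log, "raise learning rate", 1.25, kept=True)
        record_attempt(log, "widen model", 1.40, kept=False)
        print(bootstrap(log))
```

Because `bootstrap` reads nothing but the file, a new agent instance with an empty conversation history ends up with the same picture of what has been tried as the instance that wrote the log.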

When you plot business processes against these five conditions, the map is more populated than most people expect. Code generation sits at the top: fast iterations, automated evaluation, bounded environment, low cost of failure. But the same quadrant includes ad bid optimization, A/B testing for copy and creative, supply chain routing, portfolio allocation backtesting, and resume screening.
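One way to make the five conditions concrete is to treat them as a checklist that a process either passes or fails. The sketch below is illustrative only: the `LoopReadiness` class and its field names are hypothetical, and the two example assessments are assumptions made for the demonstration, not measurements.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class LoopReadiness:
    """The five conditions from the list above, one boolean each."""
    scoreable_outcome: bool
    fast_cheap_iterations: bool
    bounded_environment: bool
    low_cost_of_failure: bool
    leaves_traces: bool

    def missing(self) -> list:
        """Names of the conditions this process does not yet satisfy."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def is_ready(self) -> bool:
        return not self.missing()


if __name__ == "__main__":
    # Assumed assessments, for illustration only.
    code_generation = LoopReadiness(True, True, True, True, True)
    live_legal_filings = LoopReadiness(True, False, True, False, True)
    print("code generation ready:", code_generation.is_ready())
    print("legal filings blocked by:", live_legal_filings.missing())
```

A process that fails a condition is not ruled out forever. The `missing` list is a to-do list: build a sandbox, write an evaluator, or shorten the feedback cycle, then check again.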

The practical test is straightforward. Look at the work you do repeatedly where you already know what "better" looks like. If you could encapsulate that judgment clearly enough for an agent to use it as a score, you could point a loop at that part of your work.

The Human Job Becomes "Write a Better Memo"

The shift this primitive introduces is not about eliminating human work. It is about changing what the human work is.

In Karpathy's system, the researcher does not run experiments anymore. The researcher designs the arena the experiments live in. That means writing the strategy document: what to try, what to avoid, how to evaluate success. The human is, in Karpathy's words, "programming the program.md markdown file that provides context to the AI agents and sets up your autonomous research org."

Instead of the researcher running the research, they are designing the arena that the research lives in. The human job becomes "write a better memo," and the agent job is "execute research within the frame."

This pattern scales beyond research. A product manager writes a PRD, kicks off a loop before dinner, and reviews the pull request in the morning. A sales rep writes targeting criteria and tone guidelines, points a loop at 200 leads overnight, and reviews the top 30 responses. A financial analyst defines constraints, loops through portfolio allocation backtests, and reviews the optimized output. A recruiter writes a scoring rubric, loops through 500 resumes, and reviews flagged edge cases.

The new high-value skills that emerge are arena design (creating the context the agent operates in), evaluator construction (building the scoring function that tells the agent what "good" actually is), and problem decomposition (breaking complex work into loop-sized chunks with measurable outcomes). These skills operate at a much higher level of abstraction than most work tasks today. And the organizations that develop them first will compound that advantage every night their loops run.
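Evaluator construction is the least familiar of the three skills, so here is a small sketch of what one might look like for the recruiter example. It assumes a weighted rubric and uses crude keyword matching as a placeholder for whatever signal a real evaluator would use. `Criterion`, `score_document`, `triage`, the threshold, and the margin are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float
    keywords: tuple  # placeholder signal; a real evaluator would be richer


def score_document(text: str, rubric: list) -> float:
    """Return the weighted share of rubric criteria the text satisfies (0.0 to 1.0)."""
    total = sum(c.weight for c in rubric)
    if total <= 0:
        raise ValueError("rubric weights must sum to a positive number")
    lowered = text.lower()
    earned = sum(c.weight for c in rubric
                 if any(k.lower() in lowered for k in c.keywords))
    return earned / total


def triage(score: float, threshold: float = 0.6, margin: float = 0.15) -> str:
    """Send scores near the threshold to a human; decide the rest automatically."""
    if abs(score - threshold) <= margin:
        return "review"
    return "advance" if score > threshold else "reject"


if __name__ == "__main__":
    rubric = [
        Criterion("python experience", 2.0, ("python",)),
        Criterion("led a team", 1.0, ("managed", "led a team")),
        Criterion("wrote tests", 1.0, ("pytest", "unit test")),
    ]
    resume = "Five years of Python. Wrote pytest suites for a payments service."
    s = score_document(resume, rubric)
    print(f"score {s:.2f} -> {triage(s)}")
```

The `margin` parameter is where the human stays in the loop: anything the rubric cannot call confidently lands in the review pile, which matches the "reviews flagged edge cases" step described above.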

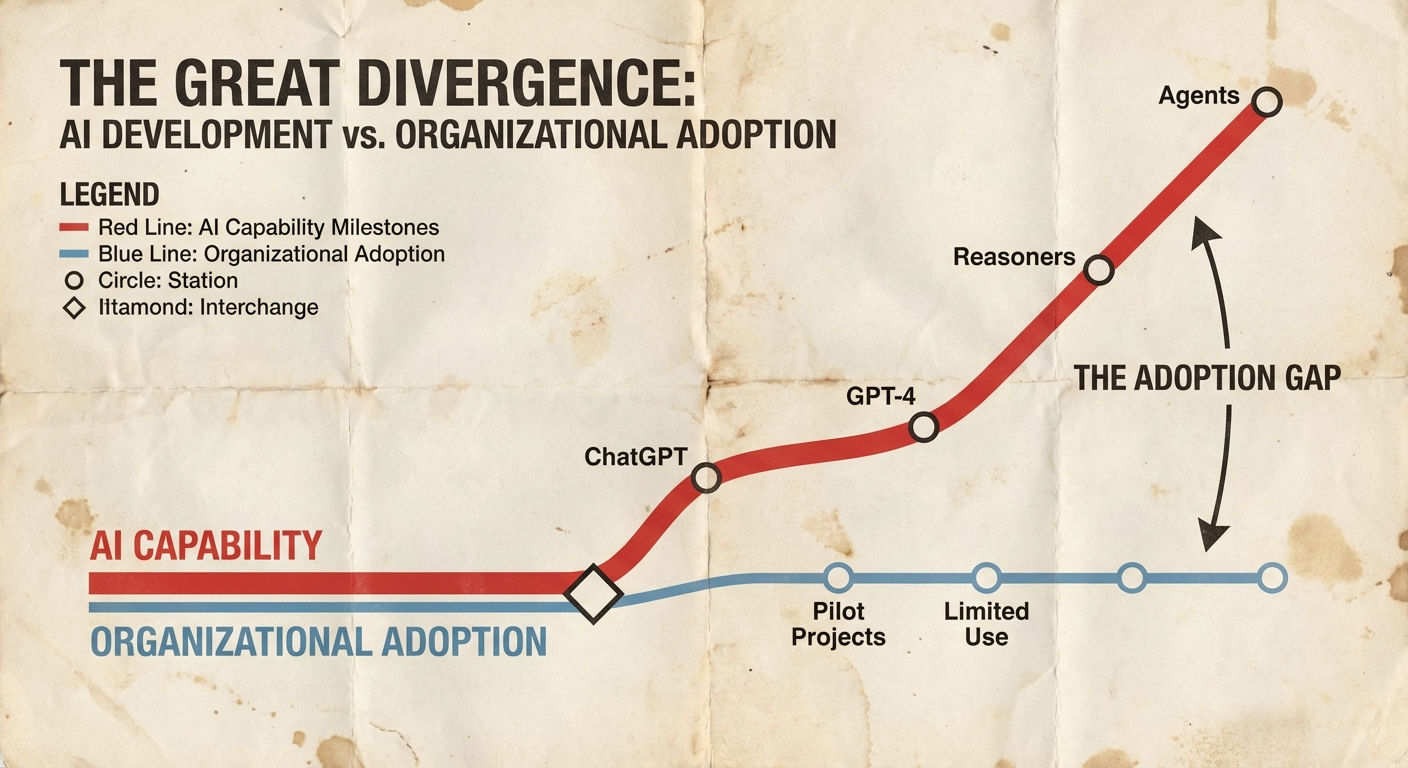

The Capability Overhang Is Widening

The gap between what AI can do and what organizations are actually doing grows every week. This is not a comfortable observation.

Every week, the capability overhang gets bigger. At some point, it is so wide that it almost becomes malfeasance to meet them where they are.

The overhang is not abstract. Consider: a marketing team that runs 30 experiments per year is now competing against one that runs 36,500. An engineering team that manually reviews every PR is competing against one where agent loops handle triage overnight. A sales organization that hand-crafts every outreach sequence is competing against one that tests hundreds of variations against live response data.
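The 36,500 figure is easier to weigh once it is broken down. The arithmetic below uses the two yearly counts from the text; the five-minute run length is borrowed from autoresearch and is only an assumption for a marketing setting, where an experiment may need far longer to collect responses.

```python
MANUAL_PER_YEAR = 30      # from the text
LOOP_PER_YEAR = 36_500    # from the text
DAYS_PER_YEAR = 365
MINUTES_PER_RUN = 5       # assumption: autoresearch's five-minute experiment length

loop_per_day = LOOP_PER_YEAR / DAYS_PER_YEAR            # experiments per day
speedup = LOOP_PER_YEAR / MANUAL_PER_YEAR               # loop team vs. manual team
hours_per_day = loop_per_day * MINUTES_PER_RUN / 60     # compute time needed per day

print(f"{loop_per_day:.0f} experiments per day")
print(f"about {speedup:.0f}x the manual team's yearly volume")
print(f"about {hours_per_day:.1f} hours of five-minute runs per day")
```

In other words, 36,500 a year is 100 a day. At five minutes per run, that fits in a little over eight hours, which is roughly one overnight session.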

The tools to productize this pattern are already shipping. Claude Code now offers a /loop command that schedules recurring agentic tasks for up to three days. The infrastructure for persistent, looping agents is not theoretical. It is available today.

And yet, this is almost certainly not the end state. Karpathy himself notes that the next step is asynchronous, massively collaborative agent networks: not one agent looping, but swarms of agents contributing across arbitrary branch structures, sharing negative results efficiently, pruning the search tree collectively. The primitives are still evolving.

The question for every organization is not whether agent loops will affect their work. It is whether they can identify, right now, which of their processes have scoreable outcomes and fast iteration cycles, and start building the arenas for agents to work in. The organizations that answer that question first will compound advantages that are difficult for others to match once established. The agent loop is a primitive. Primitives do not go away. They get absorbed into how everyone works, eventually. The window where adopting them early creates outsized returns is the window we are in right now.