AI Can Replace Entire Workflows. Most Organizations Haven't Changed a Thing.

This post is inspired by the episode "The Power To Shape AI" of the AI Daily Brief. Here’s how it connects to Superintelligent:

- The Closing Window
  - Pain Points: AI Adoption, Change Management
  - SI Connection: Strong. Organizations figuring out AI right now are setting precedents for everyone else, but most are still in the early stages. SI helps organizations move from uncertainty to structured action before the window closes.
  - Key Data Point: Despite exponential AI capability growth, remarkably little has changed in most organizations, but the window to shape how AI gets used may not last long.

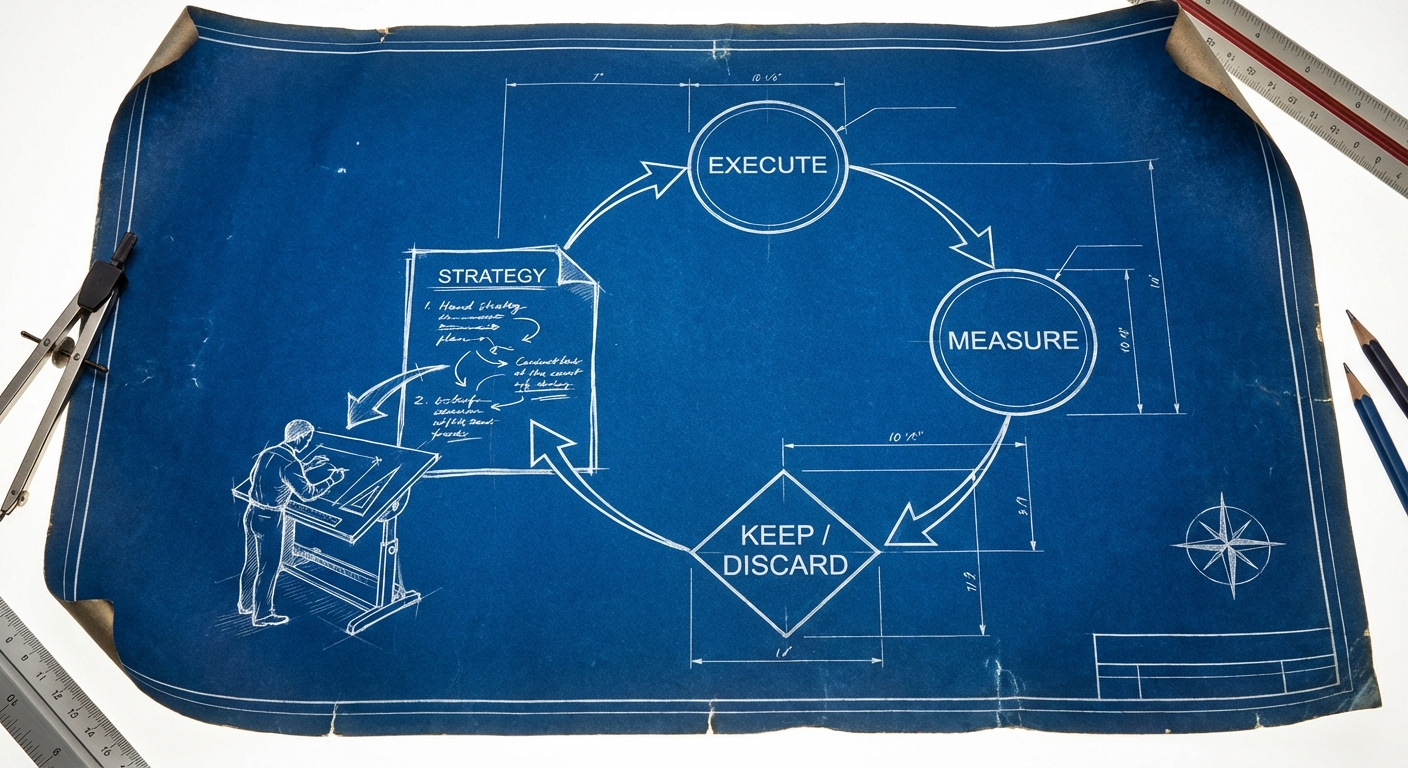

- Rolling Disruption is the New Normal
  - Pain Points: AI Adoption, ROI Measurement, Change Management
  - SI Connection: Strong. When disruption is unpredictable and rolling rather than one-time, organizations need a discovery process that keeps pace, not a one-time strategy deck. SI provides the structured, evidence-based view that cuts through the noise.
  - Key Data Point: A single week in February saw a fictional AI crisis report tank stocks, a major company announce 40% layoffs blamed on AI (that weren't purely about AI), and a public fight between a major AI lab and the Pentagon.

- From Co-Intelligence to Managing AI
  - Pain Points: AI Adoption, Workforce Productivity
  - SI Connection: Strong. We've moved from prompting AI to managing AI agents, and most organizations haven't adapted their operating models. SI's discovery process identifies where this shift matters most for each organization and what organizational changes are needed.
  - Key Data Point: Ethan Mollick identifies four big leaps in AI ability, with the fourth, workable agentic systems, arriving in late 2025, marking a shift from working with AI to managing AI.

The Capability Curve Left Everyone Behind

Ethan Mollick has been running the same AI image generation test since 2022: an otter on a plane using wifi. The progression from barely recognizable blobs to near-photographic accuracy was rapid enough. But in 2026, the same test produces documentary-quality video, complete with animated otter expressions and narration, generated from a single text prompt.

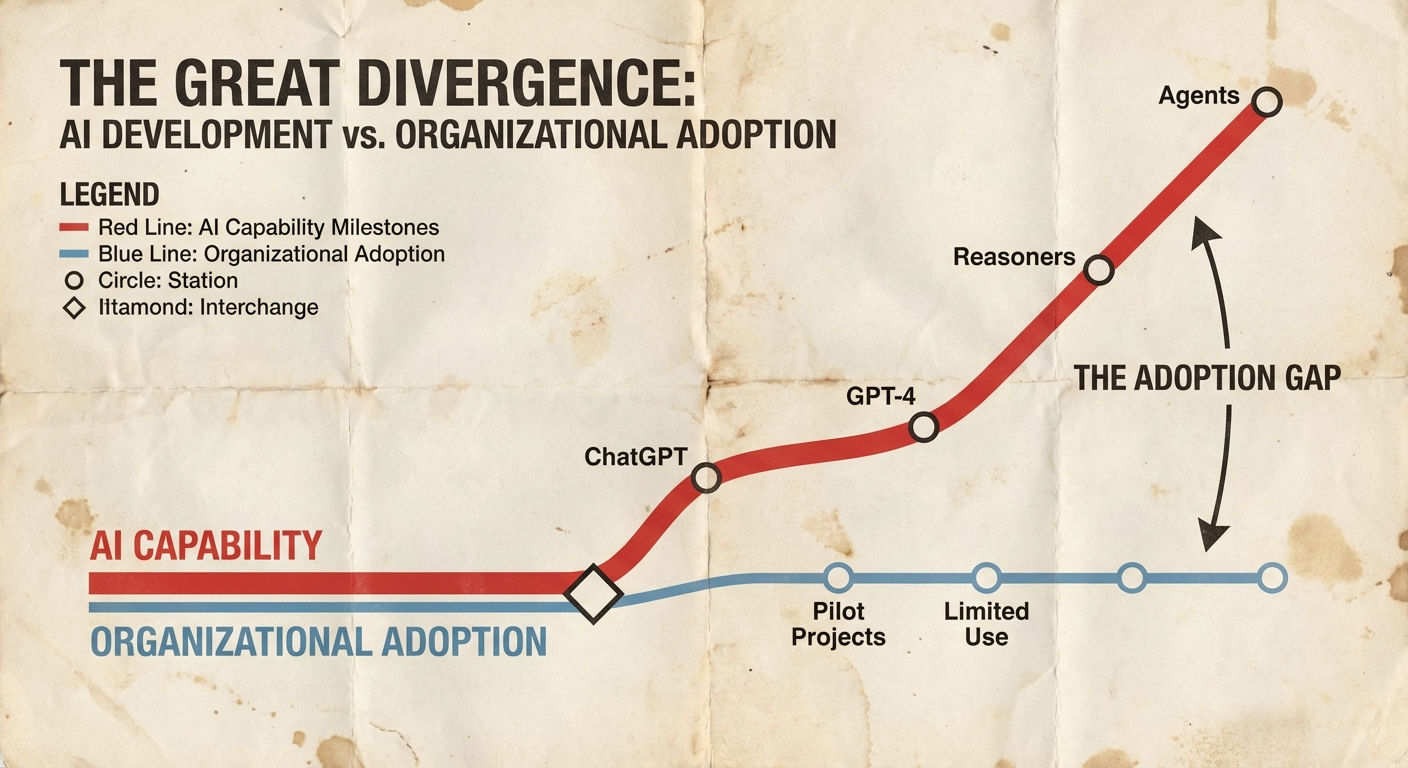

That visual progression mirrors a more consequential curve. The METR evaluation, the most widely cited benchmark for measuring how much autonomous human-equivalent work an AI system can complete, shows the same exponential growth. So do virtually all other capability benchmarks. We are not in a period of gradual improvement. We are on a curve that is getting steeper.

And yet, as Mollick writes in his latest essay "The Shape of the Thing," the punchline lands with uncomfortable precision:

Despite these amazing capabilities and tests, companies are still very early in adopting AI. Meaning that as of yet, remarkably little has changed in most organizations.

That sentence deserves to sit with every executive for a few minutes. The technology can now manage entire agent loops, ship production software without a human touching the code, and run iterative research experiments overnight. The organizations using it, in most cases, have barely moved past the chatbot phase.

From Co-Intelligence to Managing AI

Mollick identifies four major leaps in AI capability from the user's perspective. The first was ChatGPT in November 2022. The second was GPT-4 in spring 2023. The third was reasoning models, starting with o1 but reaching full expression with o3 in spring 2025. The fourth, and the one that changes the game, is workable agentic systems arriving in late 2025: a capable reasoning model harnessed inside an agent framework that can be handed real work and trusted to complete it.

That fourth leap is qualitatively different from the first three. The earlier leaps improved what AI could do in a single turn, a single response, a single interaction. The fourth leap changed the relationship entirely. As Mollick puts it:

This is an era of managing AIs rather than working with them.

The distinction matters. Co-intelligence, the back-and-forth prompting that characterized 2023 and 2024, still required a human in the loop at every step. Agentic systems take a roadmap, a set of objectives, or a research question and run with it. The human role shifts from doing the work to designing the work to be done, reviewing the output, and deciding what happens next.

Consider what this looks like in practice. A three-person team at StrongDM built what they call a "software factory," a system where AI agents write, test, and ship production software with two explicit rules: code must not be written by humans, and code must not be reviewed by humans. Each engineer spends the equivalent of their salary on AI tokens, roughly a thousand dollars a day. The agents take product roadmaps written by humans, build the software, test it in simulated customer environments, loop feedback between coding and testing agents, and ship the results.

AI is good enough to change how organizations operate. The experimentation is just getting started, even as models continue to improve.

The specific details of StrongDM's approach matter less than what it signals. A year ago, this kind of radical experimentation was an interesting thought experiment. Today, it is running in production. The gap between what AI can theoretically do and what a team is actually doing with it has collapsed for a small number of early movers. The question every organization should be asking is not whether this approach is right for them. It is whether they even understand the landscape well enough to know which experiments are worth running.

Rolling Disruption Has Already Arrived

Mollick introduces a concept he calls "rolling disruption" to describe what the near future feels like. As AI capability crosses thresholds, it unlocks radical new use cases that change people's views, sometimes overnight, about what AI can do. Organizations experimenting with AI figure out how to make it work, leading to sudden announcements about new strategies or large-scale shifts in which kinds of employees companies value most.

He points to a single week in February 2026 as a preview. In the span of five days: a fictional AI crisis report from Citrini Research tanked real stocks, Block announced 40% layoffs heavily implying AI as the cause (the reality was more complicated), and the Pentagon and Anthropic went to war over who controls how Claude gets used by the military.


None of those events were exactly what they appeared to be on the surface. The Citrini report was fictional. The Block layoffs were not purely about AI. The Anthropic dispute involved layers of complexity that are still unfolding. But that is precisely the point. Rolling disruption does not arrive with clear labels. It arrives as a series of shocks that are difficult to interpret in real time, each one shifting the ground for the next.

There is a natural human response to this kind of uncertainty: freeze. And increasingly, there are voices amplifying that impulse rather than countering it. A new tracker called Job Loss AI bills itself as a real-time counter of AI-driven layoffs. It offers no policy recommendations, no framework for action, no suggestions for what individuals or organizations should do. It presents a number going up and asks people to be scared. That kind of awareness without agency is worse than useless. It creates learned helplessness: the feeling that something terrible is happening and there is nothing to be done about it.

The organizations that are moving right now, the ones running software factories and redesigning workflows around agent capabilities, are not paralyzed by the uncertainty. They are using it as a forcing function. When the rules have not been written yet, the organizations that show up to write them gain disproportionate influence over what comes next.

The Window Is Open. It Will Not Stay Open.

Mollick closes his essay with a line that should function as an alarm for any leader still in wait-and-see mode: "The window to shape the thing may not last long, but it is here now."

That window is the gap between exponential AI capability and near-flat organizational adoption. Right now, most organizations have not yet changed how they operate. The ones that move first, the ones that systematically identify where AI creates real impact across every function and restructure accordingly, set the precedent for everyone else. They define what good looks like. They build the institutional knowledge that compounds as the technology continues to accelerate.

Uncertainty is not the same as helplessness. When a technology is this powerful and this unsettled, the choices that individuals and organizations make right now matter more.

The organizations that close the gap between what AI can do and what their teams actually do with it will not just adapt faster. They will define the new operating model that everyone else eventually copies. The question is whether that definition happens through deliberate discovery, by mapping where AI works and where it does not across every department and workflow, or whether it happens by accident, driven by whatever vendor demo happened to impress the right executive.

Every week that passes without that structured understanding is a week where the capability curve gets steeper and the adoption line stays flat. The distance between the two is not just a metric. It is the measure of how much competitive ground an organization has left to recover.