The Architecture Mistake That Kills Most Enterprise AI Initiatives

This post is inspired by the AI Daily Brief episode "How to Use Agent Skills." Here’s how it connects to Superintelligent:

- Your AI Strategy Is Drowning in Context
  - Pain Points: AI Adoption, Vendor Consolidation, Automation
  - SI Connection: Strong. The skill architecture problem (agents that try to know everything perform worse) is the exact same problem organizations face. You can't jam every AI use case into one initiative. Structured discovery reveals which capabilities matter most and when to deploy them.
  - Key Data Point: Anthropic found most skills fit into just 9 categories, but performance degrades when agents try to load all knowledge at once.
  - Pull Quotes:
    - "As AI coding agents were getting more and more capable, people started to hit a very similar wall. System prompts kept ballooning. Every new capability meant more instructions, more examples, and more edge cases crammed into a single context window."
    - "Agents don't need access to all of their knowledge all the time. What they need is to be able to load the right knowledge at the right moment."

- The Gotcha Section Is Where the Real Value Lives
  - Pain Points: Training / Upskilling, AI Adoption, Change Management
  - SI Connection: Strong. Tariq argues the highest-signal content in any skill is the gotcha section: common failure points captured over time. For organizations, this is organizational learning about AI. Most companies repeat the same AI implementation mistakes because they never capture what went wrong. SI's discovery process surfaces these gotchas before they become expensive failures.
  - Key Data Point: Anthropic's skill creator improved triggering accuracy 5 out of 6 times just by rewriting descriptions.
  - Pull Quotes:
    - "The highest signal content in any skill is the gotcha section. These sections articulate common failure points that Claude runs into when using your skill."
    - "Most authors are subject matter experts, not engineers. They know their workflows but don't have the tools to tell whether a skill still works with a new model."

- From Ad-Hoc Prompting to Reusable Capabilities
  - Pain Points: Automation, Workforce Productivity, AI Adoption
  - SI Connection: Strong. The mental model shift from one-off prompts to repeatable skills is the same shift organizations need: from random AI experiments to systematic capability building. SI helps organizations identify which capabilities to build first and how to sequence them for maximum impact.
  - Key Data Point: ClawHub has 28,000+ skills, and Notion just launched custom skills for their AI. The pattern is converging across the entire stack.
  - Pull Quotes:
    - "Write a prompt, you'll use it once. Write a skill, and you'll use it forever."
    - "AI is less and less a one-off conversation, and more and more a library of reliable, repeatable capabilities."

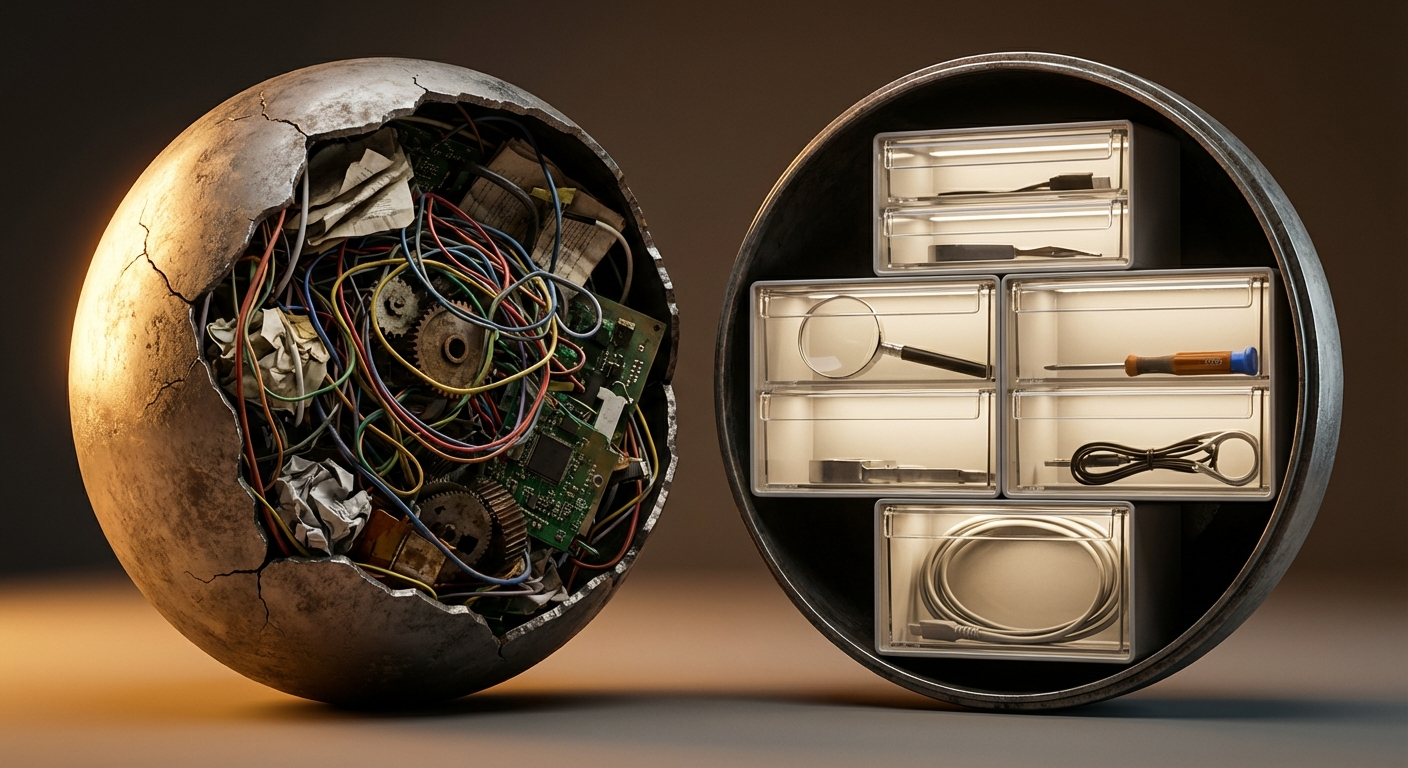

Anthropic's Claude Code team recently published something that looked, on the surface, like a niche technical post about agent skills. The team found that as AI coding agents grew more capable throughout 2025, users hit a predictable wall: system prompts kept ballooning. Every new capability meant more instructions, more examples, and more edge cases crammed into a single context window. The result was agents that got slower, more expensive, and less reliable.

The insight that fixed it was deceptively simple. Agents don't need access to all of their knowledge all the time. What they need is the ability to load the right knowledge at the right moment. Anthropic built a modular architecture around this principle and found that despite the thousands of skills now available across the ecosystem, most fit into just nine categories. The architecture problem was never about having too few capabilities. It was about trying to activate all of them simultaneously.

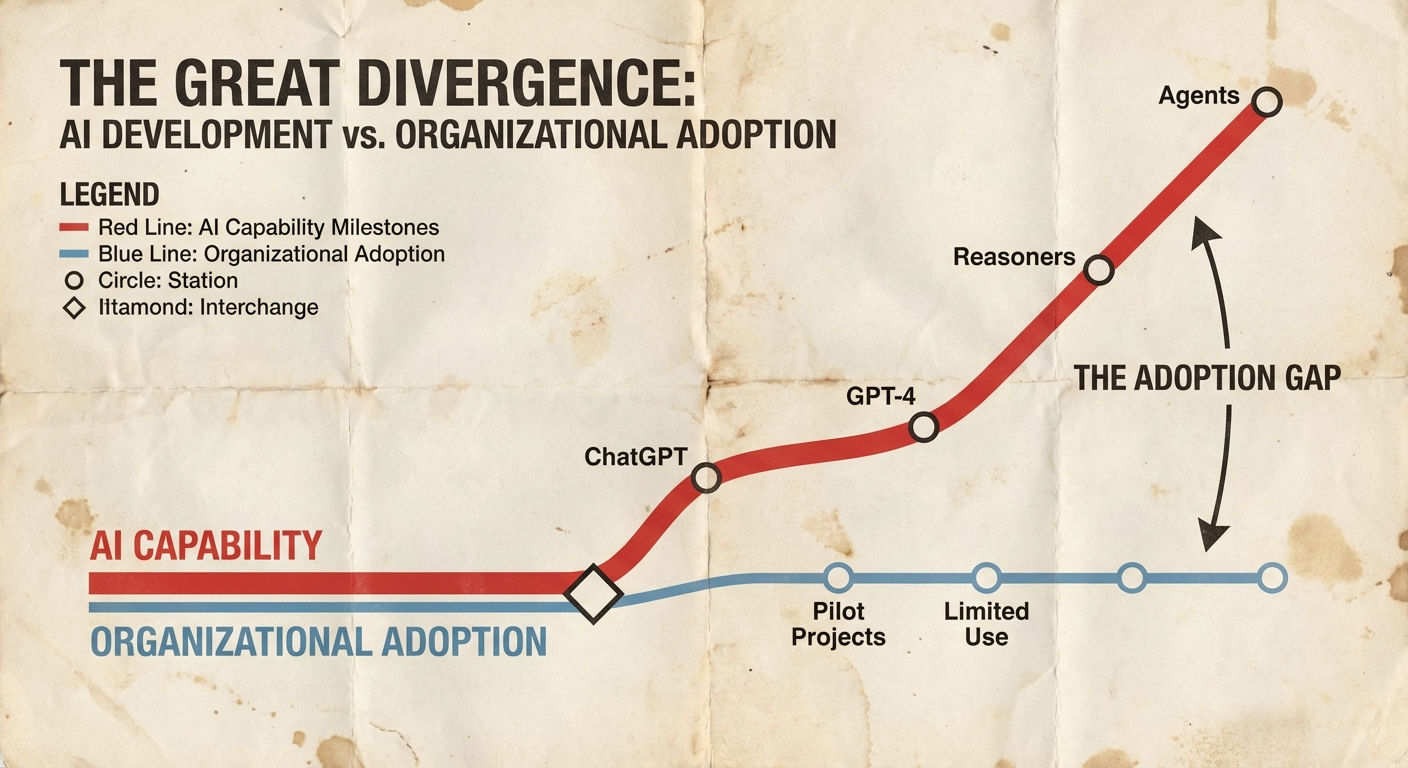

This is not just an agent architecture lesson. It is the exact pattern playing out inside organizations trying to deploy AI at scale. The enterprise that tries to jam every AI use case into a single initiative, a single team, a single strategy document, hits the same wall. Performance degrades. Costs balloon. Reliability drops. The fix is the same: modular, composable capabilities loaded at the right moment, not everything everywhere all at once.

The Context Overload Trap

The technical version of this problem is well documented. When you overload an AI agent's context window with too many instructions, the agent loses the ability to prioritize. It tries to satisfy every constraint at once and ends up satisfying none of them well. Anthropic's solution was progressive disclosure: give the agent just the description of available capabilities at the top level, let it decide what's relevant, and only then load the full instructions for the task at hand.
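To make the mechanics concrete, here is a minimal sketch of progressive disclosure. It is an illustration under stated assumptions, not Anthropic's implementation: `Skill`, `SkillRegistry`, and the keyword-overlap `select` method are hypothetical names, and the keyword match is only a stand-in for the relevance decision a real agent would make from the descriptions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Skill:
    name: str
    description: str              # one-line summary, always in context
    load_body: Callable[[], str]  # full instructions, fetched only on demand


@dataclass
class SkillRegistry:
    skills: dict[str, Skill] = field(default_factory=dict)
    loaded: list[str] = field(default_factory=list)  # names whose bodies were loaded

    def register(self, skill: Skill) -> None:
        self.skills[skill.name] = skill

    def index(self) -> str:
        """Top-level context: one line per skill, no full instructions."""
        return "\n".join(f"{s.name}: {s.description}" for s in self.skills.values())

    def select(self, task: str) -> list[str]:
        """Assumed stand-in for the agent's choice: naive keyword overlap."""
        words = set(task.lower().split())
        return [
            s.name
            for s in self.skills.values()
            if words & set(s.description.lower().split())
        ]

    def context_for(self, task: str) -> str:
        """Build the context: the index plus only the relevant skill bodies."""
        parts = [self.index()]
        for name in self.select(task):
            parts.append(self.skills[name].load_body())
            self.loaded.append(name)
        return "\n\n".join(parts)


registry = SkillRegistry()
registry.register(Skill("contract-review", "review contract clauses for risk",
                        lambda: "Full contract review instructions..."))
registry.register(Skill("test-runner", "run unit tests and report failures",
                        lambda: "Full test runner instructions..."))
print(registry.context_for("review this contract"))
```

The point of the design is that the cost of adding a skill is one line in the index, not a full set of instructions in every context.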

The organizational version is less documented but equally destructive. A typical enterprise AI initiative starts with a mandate: "Deploy AI across the organization." A team gets assembled. A vendor gets selected. A pilot gets launched. Then another pilot. Then a third. Each one adds requirements, stakeholders, compliance considerations, and integration demands to the same initiative. Within months, the strategy document looks like an overloaded system prompt. Dozens of use cases, each with different success criteria, different data requirements, different compliance profiles, all fighting for attention inside a single program.

"As AI coding agents were getting more and more capable, people started to hit a very similar wall. System prompts kept ballooning. Every new capability meant more instructions, more examples, and more edge cases crammed into a single context window."

The quote describes an agent. It also describes most enterprise AI programs we have seen. The ones that stall are almost never short on ambition or budget. They are overloaded with scope. And the overload is invisible because each individual use case seems reasonable in isolation. The problem only becomes apparent when everything runs together.

From Random Experiments to Reusable Capabilities

Anthropic's architecture points to a different approach. Instead of one massive initiative, build discrete, modular capabilities that can be invoked independently. Their taxonomy of nine skill categories is instructive not because of the specific categories (which include library references, verification, business automation, and code quality) but because of what the taxonomy represents: a shift from ad-hoc problem-solving to systematic capability building.

This pattern is converging across the entire AI stack. Notion just launched custom skills for their AI. ClawHub, a community repository, now hosts over 28,000 skills. The shift, as a recent episode of AI Daily Brief put it, is clear:

"AI is less and less a one-off conversation, and more and more a library of reliable, repeatable capabilities."

For enterprises, the translation is direct. The organizations getting results from AI are not the ones with the most pilots. They are the ones that have identified which specific capabilities matter most for their business and built repeatable systems around those capabilities. The difference between a prompt you use once and a capability you use reliably every time is the difference between an experiment and an operational advantage.

This is where most AI strategies go wrong. They optimize for breadth of experimentation rather than depth of capability. Ten pilots across ten departments sounds impressive in a board deck. One well-built, well-verified capability that actually changes how a function operates is worth more than all ten combined. But organizations rarely know which one to build first, because they have not done the work of mapping where AI creates real, measurable impact across their specific workflows.

The Gotcha Section Is the Whole Game

Perhaps the most revealing insight from Anthropic's experience is what they call the "gotcha section." Every skill includes a section documenting common failure points, the specific ways the agent tends to break when using that capability. Tariq, who wrote the original post from the Claude Code team, argues this is the highest-signal content in any skill:

"The highest signal content in any skill is the gotcha section. These sections articulate common failure points that Claude runs into when using your skill."

The gotcha section is organizational learning distilled into operational guidance. Every time the agent makes a mistake, you document it so the mistake does not recur. The skill becomes a living document that accumulates institutional knowledge about what works and what does not.
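As a rough sketch of what "a living document" could mean in practice, the snippet below keeps a skill's gotchas as structured records and counts repeats instead of duplicating them. The `SkillDoc` and `Gotcha` classes and the rendered layout are assumptions made for illustration; they are not the format Anthropic's skills use.

```python
from dataclasses import dataclass, field


@dataclass
class Gotcha:
    symptom: str          # what went wrong
    guidance: str         # what to do instead
    occurrences: int = 1  # how many times this failure has been observed


@dataclass
class SkillDoc:
    name: str
    instructions: str
    gotchas: dict[str, Gotcha] = field(default_factory=dict)

    def record_failure(self, symptom: str, guidance: str) -> Gotcha:
        """Add a new gotcha, or bump the count if this symptom was seen before."""
        key = symptom.strip().lower()
        if key in self.gotchas:
            self.gotchas[key].occurrences += 1
        else:
            self.gotchas[key] = Gotcha(symptom.strip(), guidance.strip())
        return self.gotchas[key]

    def render(self) -> str:
        """Render the skill text with gotchas listed most frequent first."""
        lines = [f"# {self.name}", "", self.instructions, "", "## Gotchas"]
        ranked = sorted(self.gotchas.values(), key=lambda g: -g.occurrences)
        lines += [f"- {g.symptom}: {g.guidance} (seen {g.occurrences}x)" for g in ranked]
        return "\n".join(lines)


doc = SkillDoc("contract-review", "Summarize obligations and flag risk.")
doc.record_failure("Misses auto-renewal clauses", "Search for 'renew' explicitly.")
doc.record_failure("Misses auto-renewal clauses", "Search for 'renew' explicitly.")
print(doc.render())
```

Ranking by occurrence keeps the most common failure at the top of the section, which is where a reader (or an agent) is most likely to see it.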

Now consider how most enterprises handle their AI failures. A pilot underperforms. The postmortem (if one happens) lives in a slide deck that circulates once and gets filed. The next team that attempts something similar starts from scratch, hits the same failure points, and files their own postmortem. The failure pattern repeats because the organization never captures what went wrong in a form that prevents recurrence.

This is not a technology problem. It is a discovery problem. Most companies repeat the same AI implementation mistakes because they never systematically surface what went wrong. They do not interview employees across functions to find where AI is already being used, what has worked, and what has not. They do not audit which tools deliver measurable returns and which ones quietly became shelfware. The gotcha sections of their AI story remain unwritten, which means every new initiative starts without the benefit of the organization's own experience.

What Modular Actually Looks Like

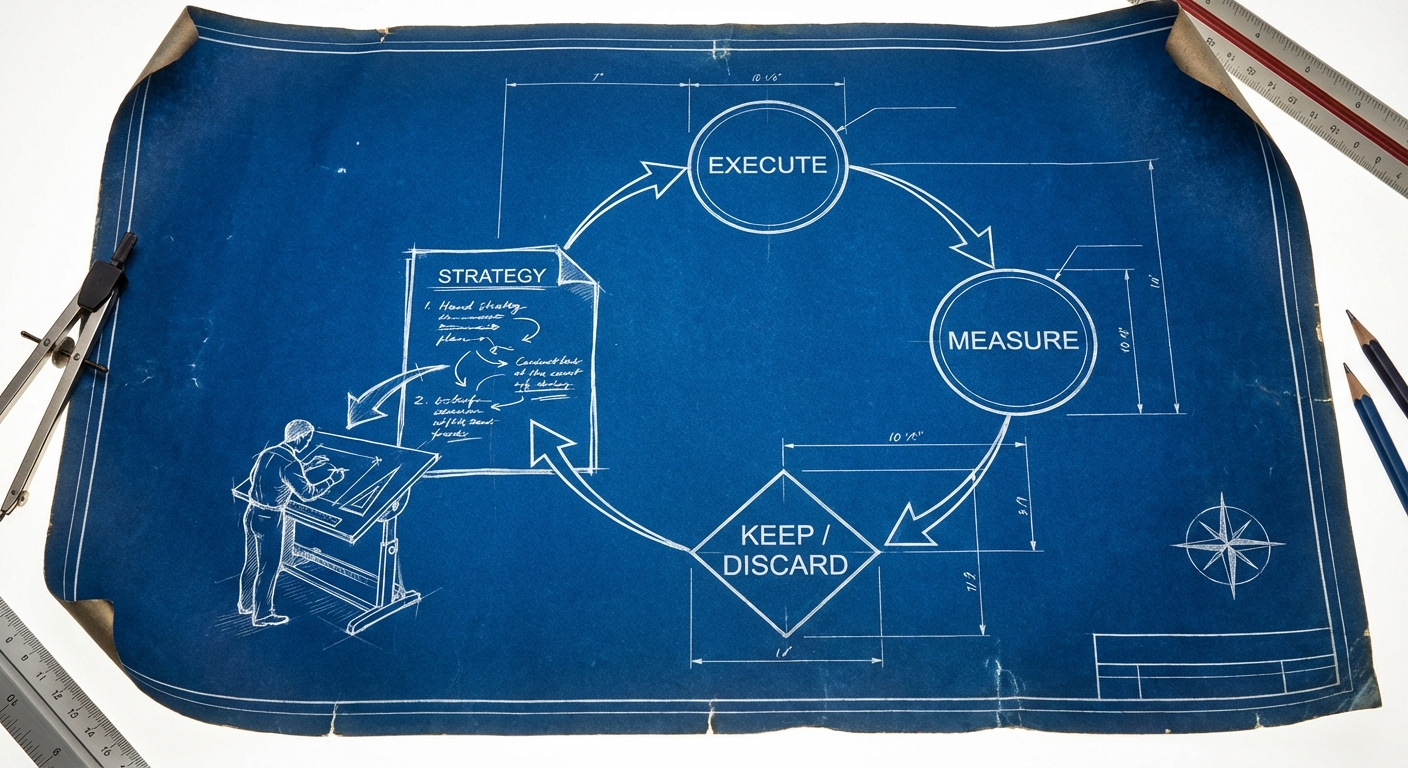

The skills architecture offers a practical model for how enterprises should structure their AI approach. The key principles translate directly.

First, progressive disclosure. Not every stakeholder needs to understand every AI initiative in full detail. Leadership needs the portfolio view: what capabilities exist, what each one does in a sentence, and which ones are delivering results. The teams operating each capability need the depth. Trying to maintain a single strategy document that serves both audiences is the organizational equivalent of cramming everything into one context window.

Second, composability. Individual capabilities should be able to operate independently while also combining into larger workflows. A verification skill in the agent world runs on its own but also plugs into a larger coding pipeline. An AI capability for contract review should work on its own but also integrate into a broader procurement workflow. If removing one capability breaks everything else, the architecture is too tightly coupled.
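One way to picture loose coupling is a set of capabilities that each accept and return a plain record, so any of them can run alone or be chained. The sketch below assumes hypothetical capabilities (`extract_terms`, `flag_risk`) and a toy rule for payment terms; it illustrates the coupling test described above, not a real contract review system.

```python
from typing import Callable

# A capability takes a shared record and returns an updated copy.
Capability = Callable[[dict], dict]


def extract_terms(doc: dict) -> dict:
    """Hypothetical capability: pull payment terms out of contract text."""
    text = doc.get("text", "").lower()
    return {**doc, "net_days": 60 if "net 60" in text else 30}


def flag_risk(doc: dict) -> dict:
    """Hypothetical capability: usable with or without extract_terms upstream."""
    net_days = doc.get("net_days")  # optional input, not a hard dependency
    if net_days is None:
        risk = "unknown"
    elif net_days > 45:
        risk = "high"
    else:
        risk = "low"
    return {**doc, "risk": risk}


def compose(*steps: Capability) -> Capability:
    """Chain capabilities into a larger workflow, applied left to right."""
    def pipeline(doc: dict) -> dict:
        for step in steps:
            doc = step(doc)
        return doc
    return pipeline


contract = {"text": "Payment terms: Net 60 from invoice date."}
procurement = compose(extract_terms, flag_risk)
print(procurement(contract))   # both capabilities combined
print(flag_risk(contract))     # one capability on its own still works
```

Removing `extract_terms` from the pipeline degrades the answer to "unknown" rather than raising an error, which is the behavior a loosely coupled architecture should have.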

Third, continuous verification. Anthropic recommends investing a full engineering week in making verification skills excellent. The equivalent for enterprises is investing real time in measuring whether each AI capability actually delivers what it promises. Not once, at pilot completion, but continuously. Models change. Workflows evolve. A capability that worked in January may underperform by June if no one is checking.
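A simple way to sketch continuous verification is to keep a fixed set of check cases, re-run them on a schedule, and compare the pass rate against a recorded baseline. Everything here is an assumption for illustration: the `Case` type, the exact-match scoring, and the 5-point tolerance would all need to be chosen for the capability being measured.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Case:
    prompt: str
    expected: str


def pass_rate(capability: Callable[[str], str], cases: list[Case]) -> float:
    """Fraction of cases where the capability's output matches exactly."""
    if not cases:
        raise ValueError("at least one check case is required")
    passed = sum(1 for c in cases if capability(c.prompt).strip() == c.expected)
    return passed / len(cases)


def has_regressed(current: float, baseline: float, tolerance: float = 0.05) -> bool:
    """True when the current pass rate falls below baseline minus tolerance."""
    return current < baseline - tolerance


# Hypothetical check set and two stand-ins for the same capability over time.
cases = [Case("2+2", "4"), Case("3+3", "6"), Case("5+5", "10"), Case("1+1", "2")]
answers = {c.prompt: c.expected for c in cases}


def january(prompt: str) -> str:
    return answers[prompt]   # behaves as verified at pilot completion


def june(prompt: str) -> str:
    return "4"               # silently drifted: only one case still passes


baseline = pass_rate(january, cases)
current = pass_rate(june, cases)
print(baseline, current, has_regressed(current, baseline))
```

The check set is deliberately kept separate from the capability, so the same cases can be re-run whenever the model or the workflow changes.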

The organizations that build this kind of modular, well-verified capability architecture tend to share one trait: they did the discovery work first. They mapped where AI creates impact before deciding what to build. They interviewed people across departments to understand which workflows benefit from AI and which do not. They built from evidence rather than assumption. The architecture follows naturally once you know what the components should be.

The most important signal in the skills story is not the technical architecture. It is the convergence. The same pattern, moving from ad-hoc prompting to structured, reusable capabilities, is appearing at every layer of the stack at once. When a pattern shows up across this many contexts at the same time, it usually means something fundamental about how work is being restructured. The ad-hoc era of AI is ending. What replaces it is a world of composable, verifiable, reusable AI capabilities.

The enterprises that understand this shift have an advantage. Not because they adopted AI first, but because they know which capabilities to build, in what order, with what verification criteria. The context window lesson applies at every scale. Whether you are an agent processing a task or an organization deploying AI across a thousand employees, the answer is the same: load the right capability at the right moment. Everything else is noise.