Why Agent Platforms Win on Momentum, Not Just Technical Merit

This post is inspired by the AI Daily Brief episode "OpenClaw Goes to OpenAI." Here’s how it connects to Superintelligent:

- The Agent Schelling Point
  - Pain Points: AI Adoption, Automation
  - SI Connection: Strong
  - OpenClaw became the agent platform everyone gravitates to not because it is technically best, but because it created a Schelling point. Tool selection is as much about ecosystem momentum as raw capability.
  - Key Data Point: OpenClaw passed VS Code in GitHub stars, doubled PyTorch's count, and tripled Claude Code's.

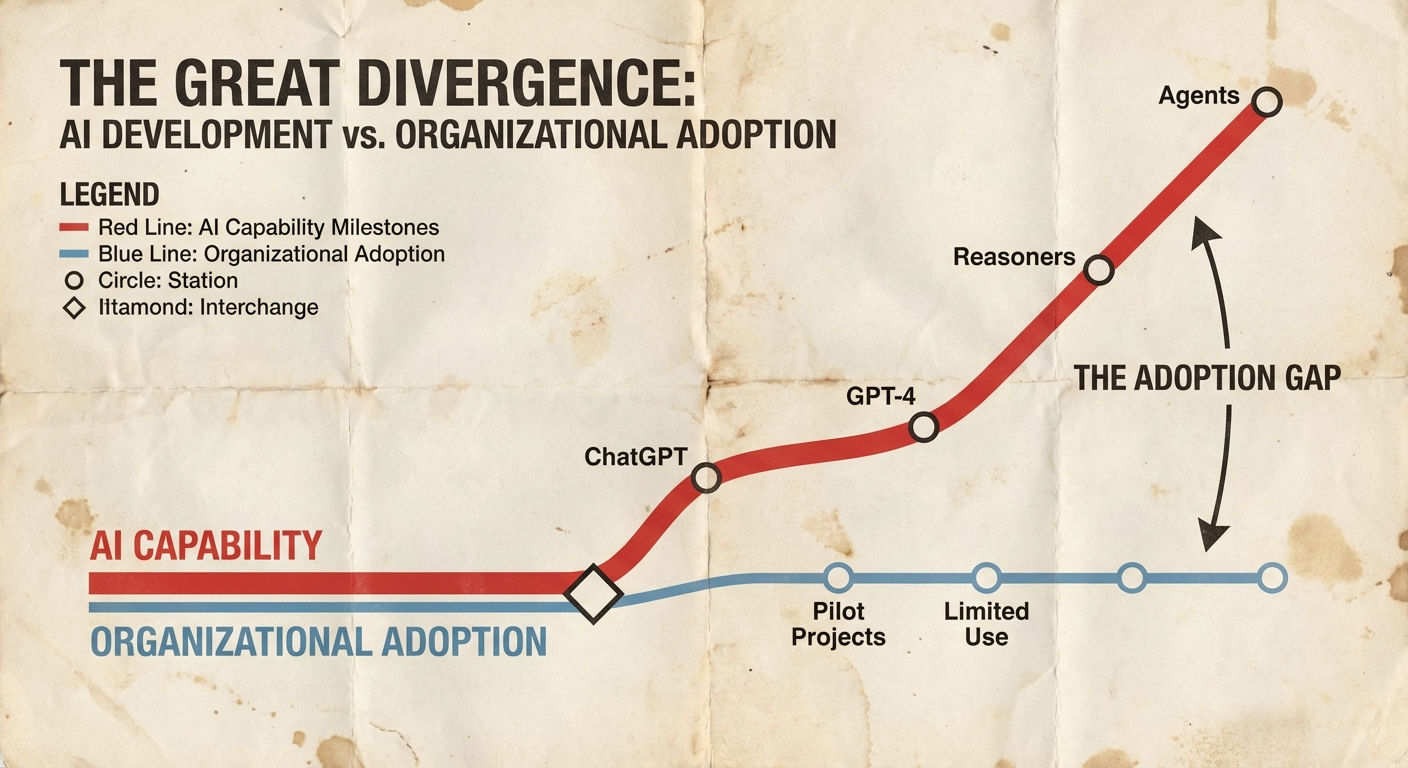

The OpenClaw acquisition by OpenAI wasn't just about code quality or technical architecture. It was about something economists call a "Schelling point" - the solution everyone gravitates to not because it's objectively best, but because it's where everyone else is going.

This distinction matters more than most companies realize when they're trying to pick the right AI tools for their teams.

The Numbers Tell the Story

OpenClaw didn't just grow fast. It blew past established developer favorites in ways that make no sense if you're only looking at technical capabilities. By GitHub stars, the platform surpassed VS Code, doubled PyTorch's count, and tripled Claude Code's.

VS Code has been the gold standard for code editors for years. PyTorch has dominated machine learning frameworks. Yet a relatively new agent platform managed to eclipse both in GitHub stars, a rough proxy for community engagement.

That's not technical superiority at work. That's network effects.

What Makes a Schelling Point

Game theory defines a Schelling point (also called a focal point) as the solution people tend to converge on when they have to coordinate without communicating. In practical terms, it's the option that feels inevitable - not because it's perfect, but because it's where everyone expects everyone else to go.

For developer tools, this shows up as ecosystem momentum. The platform that attracts the most developers gets the most plugins, integrations, and community support. Which attracts more developers. The feedback loop accelerates until one option dominates.
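The feedback loop described above can be sketched as a toy model. This is a minimal illustration, not a description of how OpenClaw actually grew: the platform names, starting user counts, quality scores, and the `alpha` exponent are all hypothetical. Each round, newcomers split between platforms in proportion to quality times existing users raised to `alpha`, so a head start compounds.

```python
def simulate_adoption(users, quality, new_per_round=1000, rounds=50, alpha=1.5):
    """Toy model: each round, newcomers split in proportion to
    quality * users**alpha. alpha > 1 means existing users attract
    newcomers more than proportionally (plugins, docs, community)."""
    users = list(users)
    for _ in range(rounds):
        weights = [q * (u ** alpha) for q, u in zip(quality, users)]
        total = sum(weights)
        users = [u + new_per_round * w / total for u, w in zip(users, weights)]
    return users


def shares(users):
    """Convert absolute user counts into market shares."""
    total = sum(users)
    return [u / total for u in users]


# Hypothetical inputs: platform A has a head start but a lower quality score,
# platform B has a higher quality score but fewer early users.
start = [2000, 1000]
quality = [1.0, 1.2]

final = simulate_adoption(start, quality)
print("starting shares:", [round(s, 2) for s in shares(start)])
print("final shares:   ", [round(s, 2) for s in shares(final)])
```

Under these assumed numbers, platform A's share keeps growing even though platform B scores higher on quality, which is the Schelling point dynamic in miniature. Different assumptions, such as a large enough quality gap or `alpha` at or below 1, would change the outcome.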

OpenClaw hit this inflection point for agent development. Not because it had the best architecture or the most features, but because it created the conditions where developers expected other developers to choose it.

Speed Changes Everything

The technical breakthrough that enabled OpenClaw's momentum was speed at scale. When OpenAI announced GPT 5.3 Codex Spark running at 1,000 tokens per second, they weren't just releasing a faster model. They were changing the fundamental economics of how agents operate.

Traditional coding models made you wait. You'd submit a request, grab coffee, and come back to see what the AI generated. Codex Spark generates 10 pages of code in seconds. That's not just faster - it's a different kind of workflow entirely.

The speed introduces new bottlenecks. As one developer noted, "Suddenly the model can produce 10 pages of code in just a few seconds. It requires a totally new UX to manage." When the AI can outpace human reading speed, the constraint becomes how quickly you can review and integrate generated code, not how long you wait for it.
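A back-of-the-envelope calculation makes the shift in bottleneck concrete. Only the 1,000 tokens per second figure comes from the announcement cited above. The tokens-per-page and human review rate below are assumptions chosen purely for illustration, so treat the output as a rough order of magnitude rather than a measurement.

```python
def generation_seconds(pages, tokens_per_page, tokens_per_second):
    """Time for the model to emit the given number of pages."""
    return pages * tokens_per_page / tokens_per_second


def review_seconds(pages, tokens_per_page, review_tokens_per_second):
    """Time for a person to read the same output at a given review rate."""
    return pages * tokens_per_page / review_tokens_per_second


PAGES = 10                     # from the post: "10 pages of code"
MODEL_TOKENS_PER_SECOND = 1000 # from the post: 1,000 tokens per second
TOKENS_PER_PAGE = 600          # ASSUMPTION: rough size of a page of code
REVIEW_TOKENS_PER_SECOND = 5   # ASSUMPTION: careful human code review pace

gen = generation_seconds(PAGES, TOKENS_PER_PAGE, MODEL_TOKENS_PER_SECOND)
rev = review_seconds(PAGES, TOKENS_PER_PAGE, REVIEW_TOKENS_PER_SECOND)

print(f"generation: {gen:.0f} s")
print(f"review:     {rev / 60:.0f} min")
print(f"review takes {rev / gen:.0f}x longer than generation")
```

With these assumed values, generation takes seconds while review takes many minutes. The exact ratio depends entirely on the assumptions, but the direction matches the developer's point: the wait moves from the model to the reviewer.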

This is the pattern that creates platform momentum. One technical breakthrough - in this case, inference speed - unlocks new use cases that require different tooling. The tools that get there first establish the patterns everyone else follows.

The Enterprise Decision Framework

This post is based on "OpenClaw Goes to OpenAI" from the AI Daily Brief.